Search Results for author: Jun-Yan Zhu

Found 75 papers, 62 papers with code

Aligning and Projecting Images to Class-conditional Generative Networks

no code implementations • ECCV 2020 • Minyoung Huh, Richard Zhang, Jun-Yan Zhu, Sylvain Paris, Aaron Hertzmann

We present a method for projecting an input image into the space of a class-conditional generative neural network.

Distilling Diffusion Models into Conditional GANs

no code implementations • 9 May 2024 • Minguk Kang, Richard Zhang, Connelly Barnes, Sylvain Paris, Suha Kwak, Jaesik Park, Eli Shechtman, Jun-Yan Zhu, Taesung Park

We propose a method to distill a complex multistep diffusion model into a single-step conditional GAN student model, dramatically accelerating inference, while preserving image quality.

Customizing Text-to-Image Models with a Single Image Pair

no code implementations • 2 May 2024 • Maxwell Jones, Sheng-Yu Wang, Nupur Kumari, David Bau, Jun-Yan Zhu

Both qualitative and quantitative experiments show that our method can effectively learn style while avoiding overfitting to image content, highlighting the potential of modeling such stylistic differences from a single image pair.

Customizing Text-to-Image Diffusion with Camera Viewpoint Control

no code implementations • 18 Apr 2024 • Nupur Kumari, Grace Su, Richard Zhang, Taesung Park, Eli Shechtman, Jun-Yan Zhu

Model customization introduces new concepts to existing text-to-image models, enabling the generation of the new concept in novel contexts.

On the Content Bias in Fréchet Video Distance

1 code implementation • 18 Apr 2024 • Songwei Ge, Aniruddha Mahapatra, Gaurav Parmar, Jun-Yan Zhu, Jia-Bin Huang

We show that FVD with features extracted from the recent large-scale self-supervised video models is less biased toward image quality.

One-Step Image Translation with Text-to-Image Models

1 code implementation • 18 Mar 2024 • Gaurav Parmar, Taesung Park, Srinivasa Narasimhan, Jun-Yan Zhu

In this work, we address two limitations of existing conditional diffusion models: their slow inference speed due to the iterative denoising process and their reliance on paired data for model fine-tuning.

Consolidating Attention Features for Multi-view Image Editing

no code implementations • 22 Feb 2024 • Or Patashnik, Rinon Gal, Daniel Cohen-Or, Jun-Yan Zhu, Fernando de la Torre

In this work, we focus on spatial control-based geometric manipulations and introduce a method to consolidate the editing process across various views.

FlashTex: Fast Relightable Mesh Texturing with LightControlNet

no code implementations • 20 Feb 2024 • Kangle Deng, Timothy Omernick, Alexander Weiss, Deva Ramanan, Jun-Yan Zhu, Tinghui Zhou, Maneesh Agrawala

We introduce LightControlNet, a new text-to-image model based on the ControlNet architecture, which allows the specification of the desired lighting as a conditioning image to the model.

Holistic Evaluation of Text-To-Image Models

1 code implementation • NeurIPS 2023 • Tony Lee, Michihiro Yasunaga, Chenlin Meng, Yifan Mai, Joon Sung Park, Agrim Gupta, Yunzhi Zhang, Deepak Narayanan, Hannah Benita Teufel, Marco Bellagente, Minguk Kang, Taesung Park, Jure Leskovec, Jun-Yan Zhu, Li Fei-Fei, Jiajun Wu, Stefano Ermon, Percy Liang

The stunning qualitative improvement of recent text-to-image models has led to their widespread attention and adoption.

Dense Text-to-Image Generation with Attention Modulation

1 code implementation • ICCV 2023 • Yunji Kim, Jiyoung Lee, Jin-Hwa Kim, Jung-Woo Ha, Jun-Yan Zhu

To address this, we propose DenseDiffusion, a training-free method that adapts a pre-trained text-to-image model to handle such dense captions while offering control over the scene layout.

Text-Guided Synthesis of Eulerian Cinemagraphs

1 code implementation • 6 Jul 2023 • Aniruddha Mahapatra, Aliaksandr Siarohin, Hsin-Ying Lee, Sergey Tulyakov, Jun-Yan Zhu

We introduce Text2Cinemagraph, a fully automated method for creating cinemagraphs from text descriptions - an especially challenging task when prompts feature imaginary elements and artistic styles, given the complexity of interpreting the semantics and motions of these images.

Evaluating Data Attribution for Text-to-Image Models

1 code implementation • ICCV 2023 • Sheng-Yu Wang, Alexei A. Efros, Jun-Yan Zhu, Richard Zhang

The problem of data attribution in such models -- which of the images in the training set are most responsible for the appearance of a given generated image -- is a difficult yet important one.

Controllable Visual-Tactile Synthesis

1 code implementation • ICCV 2023 • Ruihan Gao, Wenzhen Yuan, Jun-Yan Zhu

Deep generative models have various content creation applications such as graphic design, e-commerce, and virtual try-on.

Generalizing Dataset Distillation via Deep Generative Prior

2 code implementations • CVPR 2023 • George Cazenavette, Tongzhou Wang, Antonio Torralba, Alexei A. Efros, Jun-Yan Zhu

Dataset Distillation aims to distill an entire dataset's knowledge into a few synthetic images.

Total-Recon: Deformable Scene Reconstruction for Embodied View Synthesis

1 code implementation • ICCV 2023 • Chonghyuk Song, Gengshan Yang, Kangle Deng, Jun-Yan Zhu, Deva Ramanan

Given a minute-long RGBD video of people interacting with their pets, we render the scene from novel camera trajectories derived from the in-scene motion of actors: (1) egocentric cameras that simulate the point of view of a target actor and (2) 3rd-person cameras that follow the actor.

Expressive Text-to-Image Generation with Rich Text

no code implementations • ICCV 2023 • Songwei Ge, Taesung Park, Jun-Yan Zhu, Jia-Bin Huang

For each region, we enforce its text attributes by creating region-specific detailed prompts and applying region-specific guidance, and maintain its fidelity against plain-text generation through region-based injections.

Ablating Concepts in Text-to-Image Diffusion Models

1 code implementation • ICCV 2023 • Nupur Kumari, Bingliang Zhang, Sheng-Yu Wang, Eli Shechtman, Richard Zhang, Jun-Yan Zhu

To achieve this goal, we propose an efficient method of ablating concepts in the pretrained model, i.e., preventing the generation of a target concept.

Scaling up GANs for Text-to-Image Synthesis

1 code implementation • CVPR 2023 • Minguk Kang, Jun-Yan Zhu, Richard Zhang, Jaesik Park, Eli Shechtman, Sylvain Paris, Taesung Park

From a technical standpoint, it also marked a drastic change in the favored architecture to design generative image models.

Ranked #18 on Image Generation on ImageNet 256x256

3D-aware Conditional Image Synthesis

2 code implementations • CVPR 2023 • Kangle Deng, Gengshan Yang, Deva Ramanan, Jun-Yan Zhu

We propose pix2pix3D, a 3D-aware conditional generative model for controllable photorealistic image synthesis.

3D-aware Blending with Generative NeRFs

1 code implementation • ICCV 2023 • Hyunsu Kim, Gayoung Lee, Yunjey Choi, Jin-Hwa Kim, Jun-Yan Zhu

Image blending aims to combine multiple images seamlessly.

Zero-shot Image-to-Image Translation

2 code implementations • 6 Feb 2023 • Gaurav Parmar, Krishna Kumar Singh, Richard Zhang, Yijun Li, Jingwan Lu, Jun-Yan Zhu

However, it is still challenging to directly apply these models for editing real images for two reasons.

Ranked #13 on Text-based Image Editing on PIE-Bench

Domain Expansion of Image Generators

1 code implementation • CVPR 2023 • Yotam Nitzan, Michaël Gharbi, Richard Zhang, Taesung Park, Jun-Yan Zhu, Daniel Cohen-Or, Eli Shechtman

First, we note the generator contains a meaningful, pretrained latent space.

Multi-Concept Customization of Text-to-Image Diffusion

2 code implementations • CVPR 2023 • Nupur Kumari, Bingliang Zhang, Richard Zhang, Eli Shechtman, Jun-Yan Zhu

Can we teach a model to quickly acquire a new concept, given a few examples?

Efficient Spatially Sparse Inference for Conditional GANs and Diffusion Models

1 code implementation • 3 Nov 2022 • Muyang Li, Ji Lin, Chenlin Meng, Stefano Ermon, Song Han, Jun-Yan Zhu

With about $1\%$-area edits, SIGE accelerates DDPM by $3.0\times$ on NVIDIA RTX 3090 and $4.6\times$ on Apple M1 Pro GPU, Stable Diffusion by $7.2\times$ on 3090, and GauGAN by $5.6\times$ on 3090 and $5.2\times$ on M1 Pro GPU.

Content-Based Search for Deep Generative Models

1 code implementation • 6 Oct 2022 • Daohan Lu, Sheng-Yu Wang, Nupur Kumari, Rohan Agarwal, Mia Tang, David Bau, Jun-Yan Zhu

To address this need, we introduce the task of content-based model search: given a query and a large set of generative models, finding the models that best match the query.

Ranked #1 on Model Description Based Search on Generative Models

Contrastive Learning • Image and Sketch based Model Retrieval

Rewriting Geometric Rules of a GAN

1 code implementation • 28 Jul 2022 • Sheng-Yu Wang, David Bau, Jun-Yan Zhu

Our method allows a user to create a model that synthesizes endless objects with defined geometric changes, enabling the creation of a new generative model without the burden of curating a large-scale dataset.

Spatially-Adaptive Multilayer Selection for GAN Inversion and Editing

1 code implementation • CVPR 2022 • Gaurav Parmar, Yijun Li, Jingwan Lu, Richard Zhang, Jun-Yan Zhu, Krishna Kumar Singh

We propose a new method to invert and edit such complex images in the latent space of GANs, such as StyleGAN2.

Dataset Distillation by Matching Training Trajectories

5 code implementations • CVPR 2022 • George Cazenavette, Tongzhou Wang, Antonio Torralba, Alexei A. Efros, Jun-Yan Zhu

To efficiently obtain the initial and target network parameters for large-scale datasets, we pre-compute and store training trajectories of expert networks trained on the real dataset.

Ensembling Off-the-shelf Models for GAN Training

1 code implementation • CVPR 2022 • Nupur Kumari, Richard Zhang, Eli Shechtman, Jun-Yan Zhu

Can the collective "knowledge" from a large bank of pretrained vision models be leveraged to improve GAN training?

Ranked #1 on Image Generation on AFHQ Cat

GAN-Supervised Dense Visual Alignment

1 code implementation • CVPR 2022 • William Peebles, Jun-Yan Zhu, Richard Zhang, Antonio Torralba, Alexei A. Efros, Eli Shechtman

We propose GAN-Supervised Learning, a framework for learning discriminative models and their GAN-generated training data jointly end-to-end.

Contrastive Feature Loss for Image Prediction

1 code implementation • 12 Nov 2021 • Alex Andonian, Taesung Park, Bryan Russell, Phillip Isola, Jun-Yan Zhu, Richard Zhang

Training supervised image synthesis models requires a critic to compare two images: the ground truth to the result.

Sketch Your Own GAN

1 code implementation • ICCV 2021 • Sheng-Yu Wang, David Bau, Jun-Yan Zhu

In particular, we change the weights of an original GAN model according to user sketches.

SDEdit: Guided Image Synthesis and Editing with Stochastic Differential Equations

1 code implementation • ICLR 2022 • Chenlin Meng, Yutong He, Yang Song, Jiaming Song, Jiajun Wu, Jun-Yan Zhu, Stefano Ermon

The key challenge is balancing faithfulness to the user input (e.g., hand-drawn colored strokes) and realism of the synthesized image.

Depth-supervised NeRF: Fewer Views and Faster Training for Free

1 code implementation • CVPR 2022 • Kangle Deng, Andrew Liu, Jun-Yan Zhu, Deva Ramanan

Crucially, SFM also produces sparse 3D points that can be used as "free" depth supervision during training: we add a loss to encourage the distribution of a ray's terminating depth to match a given 3D keypoint, incorporating depth uncertainty.

Editing Conditional Radiance Fields

1 code implementation • ICCV 2021 • Steven Liu, Xiuming Zhang, Zhoutong Zhang, Richard Zhang, Jun-Yan Zhu, Bryan Russell

In this paper, we explore enabling user editing of a category-level NeRF - also known as a conditional radiance field - trained on a shape category.

Ranked #1 on Novel View Synthesis on PhotoShape

Ensembling with Deep Generative Views

1 code implementation • CVPR 2021 • Lucy Chai, Jun-Yan Zhu, Eli Shechtman, Phillip Isola, Richard Zhang

Here, we investigate whether such views can be applied to real images to benefit downstream analysis tasks such as image classification.

On Aliased Resizing and Surprising Subtleties in GAN Evaluation

3 code implementations • CVPR 2022 • Gaurav Parmar, Richard Zhang, Jun-Yan Zhu

Furthermore, we show that if compression is used on real training images, FID can actually improve if the generated images are also subsequently compressed.

Anycost GANs for Interactive Image Synthesis and Editing

1 code implementation • CVPR 2021 • Ji Lin, Richard Zhang, Frieder Ganz, Song Han, Jun-Yan Zhu

Generative adversarial networks (GANs) have enabled photorealistic image synthesis and editing.

Understanding the Role of Individual Units in a Deep Neural Network

2 code implementations • 10 Sep 2020 • David Bau, Jun-Yan Zhu, Hendrik Strobelt, Agata Lapedriza, Bolei Zhou, Antonio Torralba

Second, we use a similar analytic method to analyze a generative adversarial network (GAN) model trained to generate scenes.

The Hessian Penalty: A Weak Prior for Unsupervised Disentanglement

1 code implementation • ECCV 2020 • William Peebles, John Peebles, Jun-Yan Zhu, Alexei Efros, Antonio Torralba

In this paper, we propose the Hessian Penalty, a simple regularization term that encourages the Hessian of a generative model with respect to its input to be diagonal.

Rewriting a Deep Generative Model

3 code implementations • ECCV 2020 • David Bau, Steven Liu, Tongzhou Wang, Jun-Yan Zhu, Antonio Torralba

To address the problem, we propose a formulation in which the desired rule is changed by manipulating a layer of a deep network as a linear associative memory.

Contrastive Learning for Unpaired Image-to-Image Translation

10 code implementations • 30 Jul 2020 • Taesung Park, Alexei A. Efros, Richard Zhang, Jun-Yan Zhu

Furthermore, we draw negatives from within the input image itself, rather than from the rest of the dataset.

Swapping Autoencoder for Deep Image Manipulation

4 code implementations • NeurIPS 2020 • Taesung Park, Jun-Yan Zhu, Oliver Wang, Jingwan Lu, Eli Shechtman, Alexei A. Efros, Richard Zhang

Deep generative models have become increasingly effective at producing realistic images from randomly sampled seeds, but using such models for controllable manipulation of existing images remains challenging.

Differentiable Augmentation for Data-Efficient GAN Training

11 code implementations • NeurIPS 2020 • Shengyu Zhao, Zhijian Liu, Ji Lin, Jun-Yan Zhu, Song Han

Furthermore, with only 20% training data, we can match the top performance on CIFAR-10 and CIFAR-100.

Ranked #1 on Image Generation on CIFAR-10 (20% data)

Diverse Image Generation via Self-Conditioned GANs

2 code implementations • CVPR 2020 • Steven Liu, Tongzhou Wang, David Bau, Jun-Yan Zhu, Antonio Torralba

We introduce a simple but effective unsupervised method for generating realistic and diverse images.

Semantic Photo Manipulation with a Generative Image Prior

1 code implementation • 15 May 2020 • David Bau, Hendrik Strobelt, William Peebles, Jonas Wulff, Bolei Zhou, Jun-Yan Zhu, Antonio Torralba

First, it is hard for GANs to precisely reproduce an input image.

Transforming and Projecting Images into Class-conditional Generative Networks

2 code implementations • 4 May 2020 • Minyoung Huh, Richard Zhang, Jun-Yan Zhu, Sylvain Paris, Aaron Hertzmann

We present a method for projecting an input image into the space of a class-conditional generative neural network.

State of the Art on Neural Rendering

no code implementations • 8 Apr 2020 • Ayush Tewari, Ohad Fried, Justus Thies, Vincent Sitzmann, Stephen Lombardi, Kalyan Sunkavalli, Ricardo Martin-Brualla, Tomas Simon, Jason Saragih, Matthias Nießner, Rohit Pandey, Sean Fanello, Gordon Wetzstein, Jun-Yan Zhu, Christian Theobalt, Maneesh Agrawala, Eli Shechtman, Dan B. Goldman, Michael Zollhöfer

Neural rendering is a new and rapidly emerging field that combines generative machine learning techniques with physical knowledge from computer graphics, e.g., by the integration of differentiable rendering into network training.

GAN Compression: Efficient Architectures for Interactive Conditional GANs

1 code implementation • CVPR 2020 • Muyang Li, Ji Lin, Yaoyao Ding, Zhijian Liu, Jun-Yan Zhu, Song Han

Directly applying existing compression methods yields poor performance due to the difficulty of GAN training and the differences in generator architectures.

Seeing What a GAN Cannot Generate

1 code implementation • ICCV 2019 • David Bau, Jun-Yan Zhu, Jonas Wulff, William Peebles, Hendrik Strobelt, Bolei Zhou, Antonio Torralba

Differences in statistics reveal object classes that are omitted by a GAN.

Connecting Touch and Vision via Cross-Modal Prediction

1 code implementation • CVPR 2019 • Yunzhu Li, Jun-Yan Zhu, Russ Tedrake, Antonio Torralba

To connect vision and touch, we introduce new tasks of synthesizing plausible tactile signals from visual inputs as well as imagining how we interact with objects given tactile data as input.

Learning the signatures of the human grasp using a scalable tactile glove

no code implementations • journal 2019 • Subramanian Sundaram, Petr Kellnhofer, Yunzhu Li, Jun-Yan Zhu, Antonio Torralba, Wojciech Matusik

Using a low-cost (about US$10) scalable tactile glove sensor array, we record a large-scale tactile dataset with 135,000 frames, each covering the full hand, while interacting with 26 different objects.

Visualizing and Understanding GANs

no code implementations • ICLR Workshop DeepGenStruct 2019 • David Bau, Jun-Yan Zhu, Hendrik Strobelt, Bolei Zhou, Joshua B. Tenenbaum, William T. Freeman, Antonio Torralba

We present an analytic framework to visualize and understand GANs at the unit-, object-, and scene-level.

Semantic Image Synthesis with Spatially-Adaptive Normalization

26 code implementations • CVPR 2019 • Taesung Park, Ming-Yu Liu, Ting-Chun Wang, Jun-Yan Zhu

Previous methods directly feed the semantic layout as input to the deep network, which is then processed through stacks of convolution, normalization, and nonlinearity layers.

Ranked #3 on Sketch-to-Image Translation on COCO-Stuff

On the Units of GANs (Extended Abstract)

no code implementations • 29 Jan 2019 • David Bau, Jun-Yan Zhu, Hendrik Strobelt, Bolei Zhou, Joshua B. Tenenbaum, William T. Freeman, Antonio Torralba

We quantify the causal effect of interpretable units by measuring the ability of interventions to control objects in the output.

Visual Object Networks: Image Generation with Disentangled 3D Representation

1 code implementation • NeurIPS 2018 • Jun-Yan Zhu, Zhoutong Zhang, Chengkai Zhang, Jiajun Wu, Antonio Torralba, Joshua B. Tenenbaum, William T. Freeman

Our model first learns to synthesize 3D shapes that are indistinguishable from real shapes.

Visual Object Networks: Image Generation with Disentangled 3D Representations

1 code implementation • NeurIPS 2018 • Jun-Yan Zhu, Zhoutong Zhang, Chengkai Zhang, Jiajun Wu, Antonio Torralba, Josh Tenenbaum, Bill Freeman

The VON not only generates images that are more realistic than the state-of-the-art 2D image synthesis methods but also enables many 3D operations such as changing the viewpoint of a generated image, shape and texture editing, linear interpolation in texture and shape space, and transferring appearance across different objects and viewpoints.

Dataset Distillation

5 code implementations • 27 Nov 2018 • Tongzhou Wang, Jun-Yan Zhu, Antonio Torralba, Alexei A. Efros

Model distillation aims to distill the knowledge of a complex model into a simpler one.

GAN Dissection: Visualizing and Understanding Generative Adversarial Networks

8 code implementations • ICLR 2019 • David Bau, Jun-Yan Zhu, Hendrik Strobelt, Bolei Zhou, Joshua B. Tenenbaum, William T. Freeman, Antonio Torralba

Then, we quantify the causal effect of interpretable units by measuring the ability of interventions to control objects in the output.

Propagation Networks for Model-Based Control Under Partial Observation

1 code implementation • 28 Sep 2018 • Yunzhu Li, Jiajun Wu, Jun-Yan Zhu, Joshua B. Tenenbaum, Antonio Torralba, Russ Tedrake

There has been an increasing interest in learning dynamics simulators for model-based control.

3D-Aware Scene Manipulation via Inverse Graphics

1 code implementation • NeurIPS 2018 • Shunyu Yao, Tzu Ming Harry Hsu, Jun-Yan Zhu, Jiajun Wu, Antonio Torralba, William T. Freeman, Joshua B. Tenenbaum

In this work, we propose 3D scene de-rendering networks (3D-SDN) to address the above issues by integrating disentangled representations for semantics, geometry, and appearance into a deep generative model.

Video-to-Video Synthesis

11 code implementations • NeurIPS 2018 • Ting-Chun Wang, Ming-Yu Liu, Jun-Yan Zhu, Guilin Liu, Andrew Tao, Jan Kautz, Bryan Catanzaro

We study the problem of video-to-video synthesis, whose goal is to learn a mapping function from an input source video (e.g., a sequence of semantic segmentation masks) to an output photorealistic video that precisely depicts the content of the source video.

Generating Adversarial Examples with Adversarial Networks

10 code implementations • ICLR 2018 • Chaowei Xiao, Bo Li, Jun-Yan Zhu, Warren He, Mingyan Liu, Dawn Song

A challenge to explore adversarial robustness of neural networks on MNIST.

Spatially Transformed Adversarial Examples

3 code implementations • ICLR 2018 • Chaowei Xiao, Jun-Yan Zhu, Bo Li, Warren He, Mingyan Liu, Dawn Song

Perturbations generated through spatial transformation could result in large $\mathcal{L}_p$ distance measures, but our extensive experiments show that such spatially transformed adversarial examples are perceptually realistic and more difficult to defend against with existing defense systems.

Toward Multimodal Image-to-Image Translation

6 code implementations • NeurIPS 2017 • Jun-Yan Zhu, Richard Zhang, Deepak Pathak, Trevor Darrell, Alexei A. Efros, Oliver Wang, Eli Shechtman

Our proposed method encourages bijective consistency between the latent encoding and output modes.

High-Resolution Image Synthesis and Semantic Manipulation with Conditional GANs

20 code implementations • CVPR 2018 • Ting-Chun Wang, Ming-Yu Liu, Jun-Yan Zhu, Andrew Tao, Jan Kautz, Bryan Catanzaro

We present a new method for synthesizing high-resolution photo-realistic images from semantic label maps using conditional generative adversarial networks (conditional GANs).

Ranked #2 on Sketch-to-Image Translation on COCO-Stuff

Conditional Image Generation • Fundus to Angiography Generation

CyCADA: Cycle-Consistent Adversarial Domain Adaptation

3 code implementations • ICML 2018 • Judy Hoffman, Eric Tzeng, Taesung Park, Jun-Yan Zhu, Phillip Isola, Kate Saenko, Alexei A. Efros, Trevor Darrell

Domain adaptation is critical for success in new, unseen environments.

Real-Time User-Guided Image Colorization with Learned Deep Priors

3 code implementations • 8 May 2017 • Richard Zhang, Jun-Yan Zhu, Phillip Isola, Xinyang Geng, Angela S. Lin, Tianhe Yu, Alexei A. Efros

The system directly maps a grayscale image, along with sparse, local user "hints" to an output colorization with a Convolutional Neural Network (CNN).

Light Field Video Capture Using a Learning-Based Hybrid Imaging System

1 code implementation • 8 May 2017 • Ting-Chun Wang, Jun-Yan Zhu, Nima Khademi Kalantari, Alexei A. Efros, Ravi Ramamoorthi

Given a 3 fps light field sequence and a standard 30 fps 2D video, our system can then generate a full light field video at 30 fps.

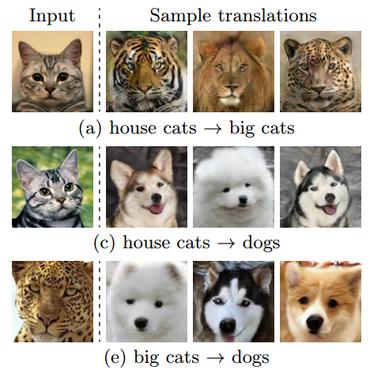

Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks

187 code implementations • ICCV 2017 • Jun-Yan Zhu, Taesung Park, Phillip Isola, Alexei A. Efros

Image-to-image translation is a class of vision and graphics problems where the goal is to learn the mapping between an input image and an output image using a training set of aligned image pairs.

Ranked #1 on Image-to-Image Translation on zebra2horse (Frechet Inception Distance metric)

Multimodal Unsupervised Image-To-Image Translation • Style Transfer

Image-to-Image Translation with Conditional Adversarial Networks

176 code implementations • CVPR 2017 • Phillip Isola, Jun-Yan Zhu, Tinghui Zhou, Alexei A. Efros

We investigate conditional adversarial networks as a general-purpose solution to image-to-image translation problems.

Generative Visual Manipulation on the Natural Image Manifold

1 code implementation • 12 Sep 2016 • Jun-Yan Zhu, Philipp Krähenbühl, Eli Shechtman, Alexei A. Efros

Realistic image manipulation is challenging because it requires modifying the image appearance in a user-controlled way, while preserving the realism of the result.

A 4D Light-Field Dataset and CNN Architectures for Material Recognition

no code implementations • 24 Aug 2016 • Ting-Chun Wang, Jun-Yan Zhu, Ebi Hiroaki, Manmohan Chandraker, Alexei A. Efros, Ravi Ramamoorthi

We introduce a new light-field dataset of materials, and take advantage of the recent success of deep learning to perform material recognition on the 4D light-field.

Learning a Discriminative Model for the Perception of Realism in Composite Images

1 code implementation • ICCV 2015 • Jun-Yan Zhu, Philipp Krähenbühl, Eli Shechtman, Alexei A. Efros

What makes an image appear realistic?

MILCut: A Sweeping Line Multiple Instance Learning Paradigm for Interactive Image Segmentation

no code implementations • CVPR 2014 • Jiajun Wu, Yibiao Zhao, Jun-Yan Zhu, Siwei Luo, Zhuowen Tu

Interactive segmentation, in which a user provides a bounding box to an object of interest for image segmentation, has been applied to a variety of applications in image editing, crowdsourcing, computer vision, and medical imaging.