Search Results for author: Junlin Xie

Found 5 papers, 2 papers with code

Large Multimodal Agents: A Survey

no code implementations • 23 Feb 2024 • Junlin Xie, Zhihong Chen, Ruifei Zhang, Xiang Wan, Guanbin Li

In this paper, we conduct a systematic review of LLM-driven multimodal agents, which we refer to as large multimodal agents (LMAs for short).

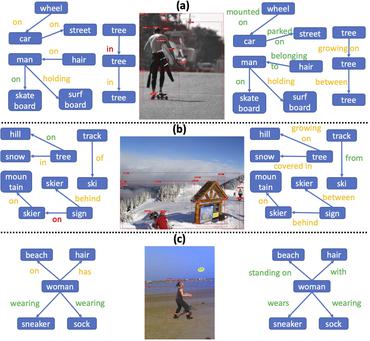

Generalized Unbiased Scene Graph Generation

no code implementations • 9 Aug 2023 • Xinyu Lyu, Lianli Gao, Junlin Xie, Pengpeng Zeng, Yulu Tian, Jie Shao, Heng Tao Shen

To this end, we propose the Multi-Concept Learning (MCL) framework, which ensures a balanced learning process across rare, uncommon, and common concepts.

Dilated Context Integrated Network with Cross-Modal Consensus for Temporal Emotion Localization in Videos

1 code implementation • 3 Aug 2022 • Juncheng Li, Junlin Xie, Linchao Zhu, Long Qian, Siliang Tang, Wenqiao Zhang, Haochen Shi, Shengyu Zhang, Longhui Wei, Qi Tian, Yueting Zhuang

In this paper, we introduce a new task, named Temporal Emotion Localization in videos (TEL), which aims to detect human emotions and localize their corresponding temporal boundaries in untrimmed videos with aligned subtitles.

KinD-LCE Curve Estimation And Retinex Fusion On Low-Light Image

no code implementations • 19 Jul 2022 • Xiaochun Lei, Weiliang Mai, Junlin Xie, He Liu, Zetao Jiang, Zhaoting Gong, Chang Lu, Linjun Lu

The proposed method, KinD-LCE, uses a light curve estimation module to enhance the illumination map in the Retinex-decomposed image, improving the overall image brightness.

Compositional Temporal Grounding with Structured Variational Cross-Graph Correspondence Learning

1 code implementation • CVPR 2022 • Juncheng Li, Junlin Xie, Long Qian, Linchao Zhu, Siliang Tang, Fei Wu, Yi Yang, Yueting Zhuang, Xin Eric Wang

To systematically measure the compositional generalizability of temporal grounding models, we introduce a new Compositional Temporal Grounding task and construct two new dataset splits, i.e., Charades-CG and ActivityNet-CG.