Search Results for author: Karsten Roscher

Found 9 papers, 3 papers with code

Finding Dino: A plug-and-play framework for unsupervised detection of out-of-distribution objects using prototypes

no code implementations • 11 Apr 2024 • Poulami Sinhamahapatra, Franziska Schwaiger, Shirsha Bose, Huiyu Wang, Karsten Roscher, Stephan Guennemann

It is an inference-based method that does not require training on the domain dataset and relies on extracting relevant features from self-supervised pre-trained models.

Concept-Guided LLM Agents for Human-AI Safety Codesign

no code implementations • 3 Apr 2024 • Florian Geissler, Karsten Roscher, Mario Trapp

Generative AI is increasingly important in software engineering, including safety engineering, where its use ensures that software does not cause harm to people.

Enhancing Interpretability of Vertebrae Fracture Grading using Human-interpretable Prototypes

no code implementations • 3 Apr 2024 • Poulami Sinhamahapatra, Suprosanna Shit, Anjany Sekuboyina, Malek Husseini, David Schinz, Nicolas Lenhart, Joern Menze, Jan Kirschke, Karsten Roscher, Stephan Guennemann

In this work, we propose a novel interpretable-by-design method, ProtoVerse, to find relevant sub-parts of vertebral fractures (prototypes) that reliably explain the model's decision in a human-understandable way.

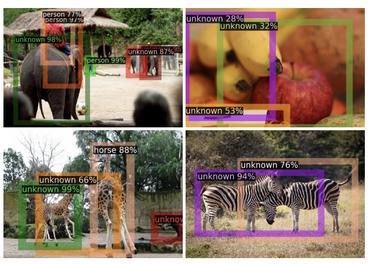

Preventing Errors in Person Detection: A Part-Based Self-Monitoring Framework

1 code implementation • 10 Jul 2023 • Franziska Schwaiger, Andrea Matic, Karsten Roscher, Stephan Günnemann

The ability to detect learned objects regardless of their appearance is crucial for autonomous systems in real-world applications.

Diffusion Denoised Smoothing for Certified and Adversarial Robust Out-Of-Distribution Detection

1 code implementation • 27 Mar 2023 • Nicola Franco, Daniel Korth, Jeanette Miriam Lorenz, Karsten Roscher, Stephan Guennemann

As the use of machine learning continues to expand, the importance of ensuring its safety cannot be overstated.

Towards Human-Interpretable Prototypes for Visual Assessment of Image Classification Models

no code implementations • 22 Nov 2022 • Poulami Sinhamahapatra, Lena Heidemann, Maureen Monnet, Karsten Roscher

Explaining black-box Artificial Intelligence (AI) models is a cornerstone for trustworthy AI and a prerequisite for its use in safety critical applications such that AI models can reliably assist humans in critical decisions.

Is it all a cluster game? -- Exploring Out-of-Distribution Detection based on Clustering in the Embedding Space

no code implementations • 16 Mar 2022 • Poulami Sinhamahapatra, Rajat Koner, Karsten Roscher, Stephan Günnemann

It is essential for safety-critical applications of deep neural networks to determine when new inputs are significantly different from the training distribution.

OODformer: Out-Of-Distribution Detection Transformer

1 code implementation • 19 Jul 2021 • Rajat Koner, Poulami Sinhamahapatra, Karsten Roscher, Stephan Günnemann, Volker Tresp

A serious problem in image classification is that a trained model might perform well for input data that originates from the same distribution as the data available for model training, but perform much worse for out-of-distribution (OOD) samples.

From Black-box to White-box: Examining Confidence Calibration under different Conditions

no code implementations • 8 Jan 2021 • Franziska Schwaiger, Maximilian Henne, Fabian Küppers, Felippe Schmoeller Roza, Karsten Roscher, Anselm Haselhoff

Based on previous work, we study the miscalibration of object detection models with respect to image location and box scale.