Search Results for author: Wenting Zhao

Found 34 papers, 9 papers with code

Enhancing Multiple-choice Machine Reading Comprehension by Punishing Illogical Interpretations

no code implementations • EMNLP 2021 • Yiming Ju, Yuanzhe Zhang, Zhixing Tian, Kang Liu, Xiaohuan Cao, Wenting Zhao, Jinlong Li, Jun Zhao

Multiple-choice MRC is one of the most studied tasks in MRC due to the convenience of evaluation and the flexibility of answer format.

Deep Reasoning Networks for Unsupervised Pattern De-mixing with Constraint Reasoning

no code implementations • ICML 2020 • Di Chen, Yiwei Bai, Wenting Zhao, Sebastian Ament, John Gregoire, Carla Gomes

We introduce Deep Reasoning Networks (DRNets), an end-to-end framework that combines deep learning with constraint reasoning for solving pattern de-mixing problems, typically in an unsupervised or very-weakly-supervised setting.

WildChat: 1M ChatGPT Interaction Logs in the Wild

no code implementations • 2 May 2024 • Wenting Zhao, Xiang Ren, Jack Hessel, Claire Cardie, Yejin Choi, Yuntian Deng

In addition to timestamped chat transcripts, we enrich the dataset with demographic data, including state, country, and hashed IP addresses, alongside request headers.

kNN-ICL: Compositional Task-Oriented Parsing Generalization with Nearest Neighbor In-Context Learning

no code implementations • 17 Dec 2023 • Wenting Zhao, Ye Liu, Yao Wan, Yibo Wang, Qingyang Wu, Zhongfen Deng, Jiangshu Du, Shuaiqi Liu, Yunlong Xu, Philip S. Yu

Task-Oriented Parsing (TOP) enables conversational assistants to interpret user commands expressed in natural language, transforming them into structured outputs that combine elements of both natural language and intent/slot tags.

Language Model Inversion

2 code implementations • 22 Nov 2023 • John X. Morris, Wenting Zhao, Justin T. Chiu, Vitaly Shmatikov, Alexander M. Rush

We consider the problem of language model inversion and show that next-token probabilities contain a surprising amount of information about the preceding text.

UNcommonsense Reasoning: Abductive Reasoning about Uncommon Situations

no code implementations • 14 Nov 2023 • Wenting Zhao, Justin T Chiu, Jena D. Hwang, Faeze Brahman, Jack Hessel, Sanjiban Choudhury, Yejin Choi, Xiang Lorraine Li, Alane Suhr

To instead investigate the ability to model unusual, unexpected, and unlikely situations, we explore the task of uncommonsense abductive reasoning.

In Search of the Long-Tail: Systematic Generation of Long-Tail Inferential Knowledge via Logical Rule Guided Search

1 code implementation • 13 Nov 2023 • Huihan Li, Yuting Ning, Zeyi Liao, Siyuan Wang, Xiang Lorraine Li, Ximing Lu, Wenting Zhao, Faeze Brahman, Yejin Choi, Xiang Ren

We further use the data generated by LINK to construct a dataset Logic-Induced-Long-Tail (LINT) that can be used to evaluate downstream models on the long-tail distribution; LINT contains 108K knowledge statements spanning four domains.

JPAVE: A Generation and Classification-based Model for Joint Product Attribute Prediction and Value Extraction

1 code implementation • 7 Nov 2023 • Zhongfen Deng, Hao Peng, Tao Zhang, Shuaiqi Liu, Wenting Zhao, Yibo Wang, Philip S. Yu

Furthermore, the copy mechanism in value generator and the value attention module in value classifier help our model address the data discrepancy issue by only focusing on the relevant part of input text and ignoring other information which causes the discrepancy issue such as sentence structure in the text.

Aspect-based Meeting Transcript Summarization: A Two-Stage Approach with Weak Supervision on Sentence Classification

no code implementations • 7 Nov 2023 • Zhongfen Deng, Seunghyun Yoon, Trung Bui, Franck Dernoncourt, Quan Hung Tran, Shuaiqi Liu, Wenting Zhao, Tao Zhang, Yibo Wang, Philip S. Yu

Then we merge the sentences selected for a specific aspect as the input for the summarizer to produce the aspect-based summary.

DIVKNOWQA: Assessing the Reasoning Ability of LLMs via Open-Domain Question Answering over Knowledge Base and Text

no code implementations • 31 Oct 2023 • Wenting Zhao, Ye Liu, Tong Niu, Yao Wan, Philip S. Yu, Shafiq Joty, Yingbo Zhou, Semih Yavuz

Moreover, a significant gap in the current landscape is the absence of a realistic benchmark for evaluating the effectiveness of grounding LLMs on heterogeneous knowledge sources (e.g., knowledge base and text).

Symbolic Planning and Code Generation for Grounded Dialogue

1 code implementation • 26 Oct 2023 • Justin T. Chiu, Wenting Zhao, Derek Chen, Saujas Vaduguru, Alexander M. Rush, Daniel Fried

Large language models (LLMs) excel at processing and generating both text and code.

Named Entity Recognition via Machine Reading Comprehension: A Multi-Task Learning Approach

1 code implementation • 20 Sep 2023 • Yibo Wang, Wenting Zhao, Yao Wan, Zhongfen Deng, Philip S. Yu

In this paper, we propose to incorporate the label dependencies among entity types into a multi-task learning framework for better MRC-based NER.

Localize, Retrieve and Fuse: A Generalized Framework for Free-Form Question Answering over Tables

no code implementations • 20 Sep 2023 • Wenting Zhao, Ye Liu, Yao Wan, Yibo Wang, Zhongfen Deng, Philip S. Yu

Furthermore, TAG-QA outperforms the end-to-end model T5 by 16% and 12% on BLEU-4 and PARENT F-score, respectively.

Self-Calibrated Cross Attention Network for Few-Shot Segmentation

1 code implementation • ICCV 2023 • Qianxiong Xu, Wenting Zhao, Guosheng Lin, Cheng Long

Moreover, when calculating SCCA, we design a scaled-cosine mechanism to better utilize the support features for similarity calculation.

Ranked #8 on Few-Shot Semantic Segmentation on COCO-20i (5-shot)

Click-Conversion Multi-Task Model with Position Bias Mitigation for Sponsored Search in eCommerce

no code implementations • 29 Jul 2023 • Yibo Wang, Yanbing Xue, Bo Liu, Musen Wen, Wenting Zhao, Stephen Guo, Philip S. Yu

Position bias, the phenomenon whereby users tend to focus on higher-ranked items of the search result list regardless of the actual relevance to queries, is prevailing in many ranking systems.

Structure-Sensitive Graph Dictionary Embedding for Graph Classification

no code implementations • 18 Jun 2023 • Guangbu Liu, Tong Zhang, Xudong Wang, Wenting Zhao, Chuanwei Zhou, Zhen Cui

Instead of a plain use of a base graph dictionary, we propose the variational graph dictionary adaptation (VGDA) to generate a personalized dictionary (named adapted graph dictionary) for catering to each input graph.

Abductive Commonsense Reasoning Exploiting Mutually Exclusive Explanations

no code implementations • 24 May 2023 • Wenting Zhao, Justin T. Chiu, Claire Cardie, Alexander M. Rush

Instead of using direct supervision, this work proposes an approach for abductive commonsense reasoning that exploits the fact that only a subset of explanations is correct for a given context.

HOP, UNION, GENERATE: Explainable Multi-hop Reasoning without Rationale Supervision

no code implementations • 23 May 2023 • Wenting Zhao, Justin T. Chiu, Claire Cardie, Alexander M. Rush

Explainable multi-hop question answering (QA) not only predicts answers but also identifies rationales, i.e., subsets of input sentences used to derive the answers.

Serenity: Library Based Python Code Analysis for Code Completion and Automated Machine Learning

no code implementations • 5 Jan 2023 • Wenting Zhao, Ibrahim Abdelaziz, Julian Dolby, Kavitha Srinivas, Mossad Helali, Essam Mansour

We demonstrate the efficiency and usefulness of Serenity's analysis in two applications: code completion and automated machine learning.

Compositional Task-Oriented Parsing as Abstractive Question Answering

1 code implementation • NAACL 2022 • Wenting Zhao, Konstantine Arkoudas, Weiqi Sun, Claire Cardie

Task-oriented parsing (TOP) aims to convert natural language into machine-readable representations of specific tasks, such as setting an alarm.

Sentiment Word Aware Multimodal Refinement for Multimodal Sentiment Analysis with ASR Errors

1 code implementation • Findings (ACL) 2022 • Yang Wu, Yanyan Zhao, Hao Yang, Song Chen, Bing Qin, Xiaohuan Cao, Wenting Zhao

Through further analysis of the ASR outputs, we find that in some cases the sentiment words, the key sentiment elements in the textual modality, are recognized as other words, which makes the sentiment of the text change and hurts the performance of multimodal sentiment models directly.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

+4

Attend, Memorize and Generate: Towards Faithful Table-to-Text Generation in Few Shots

1 code implementation • Findings (EMNLP) 2021 • Wenting Zhao, Ye Liu, Yao Wan, Philip S. Yu

Few-shot table-to-text generation is a task of composing fluent and faithful sentences to convey table content using limited data.

Automating Crystal-Structure Phase Mapping: Combining Deep Learning with Constraint Reasoning

no code implementations • 21 Aug 2021 • Di Chen, Yiwei Bai, Sebastian Ament, Wenting Zhao, Dan Guevarra, Lan Zhou, Bart Selman, R. Bruce van Dover, John M. Gregoire, Carla P. Gomes

DRNets compensate for the limited data by exploiting and magnifying the rich prior knowledge about the thermodynamic rules governing the mixtures of crystals with constraint reasoning seamlessly integrated into neural network optimization.

Enriching Non-Autoregressive Transformer with Syntactic and Semantic Structures for Neural Machine Translation

no code implementations • EACL 2021 • Ye Liu, Yao Wan, JianGuo Zhang, Wenting Zhao, Philip Yu

In this paper, we claim that the syntactic and semantic structures among natural language are critical for non-autoregressive machine translation and can further improve the performance.

HOT-VAE: Learning High-Order Label Correlation for Multi-Label Classification via Attention-Based Variational Autoencoders

no code implementations • 9 Mar 2021 • Wenting Zhao, Shufeng Kong, Junwen Bai, Daniel Fink, Carla Gomes

This in turn leads to a challenging and long-standing problem in the field of computer science: how to perform accurate multi-label classification with hundreds of labels?

Evaluating Multi-label Classifiers with Noisy Labels

no code implementations • 16 Feb 2021 • Wenting Zhao, Carla Gomes

In the real world, it is more common to deal with noisy datasets than clean datasets, given how modern datasets are labeled by a large group of annotators on crowdsourcing platforms, but little attention has been given to evaluating multi-label classifiers with noisy labels.

Zero Training Overhead Portfolios for Learning to Solve Combinatorial Problems

no code implementations • 5 Feb 2021 • Yiwei Bai, Wenting Zhao, Carla P. Gomes

There has been an increasing interest in harnessing deep learning to tackle combinatorial optimization (CO) problems in recent years.

Enriching Non-Autoregressive Transformer with Syntactic and Semantic Structures for Neural Machine Translation

no code implementations • 22 Jan 2021 • Ye Liu, Yao Wan, Jian-Guo Zhang, Wenting Zhao, Philip S. Yu

In this paper, we claim that the syntactic and semantic structures among natural language are critical for non-autoregressive machine translation and can further improve the performance.

Graph Deformer Network

no code implementations • 1 Jan 2021 • Wenting Zhao, Yuan Fang, Zhen Cui, Tong Zhang, Jian Yang, Wei Liu

In this paper, we propose a simple yet effective graph deformer network (GDN) to fulfill anisotropic convolution filtering on graphs, analogous to the standard convolution operation on images.

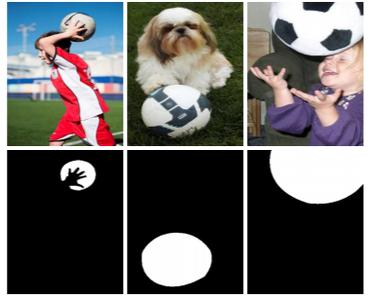

Co-Saliency Detection with Co-Attention Fully Convolutional Network

no code implementations • 20 Aug 2020 • Guangshuai Gao, Wenting Zhao, Qingjie Liu, Yunhong Wang

Co-saliency detection aims to detect common salient objects from a group of relevant images.

Dual-Attention Graph Convolutional Network

no code implementations • 28 Nov 2019 • Xueya Zhang, Tong Zhang, Wenting Zhao, Zhen Cui, Jian Yang

Graph convolutional networks (GCNs) have shown the powerful ability in text structure representation and effectively facilitate the task of text classification.

Deep Reasoning Networks: Thinking Fast and Slow, for Pattern De-mixing

no code implementations • 25 Sep 2019 • Di Chen, Yiwei Bai, Wenting Zhao, Sebastian Ament, John M. Gregoire, Carla P. Gomes

We introduce Deep Reasoning Networks (DRNets), an end-to-end framework that combines deep learning with reasoning for solving pattern de-mixing problems, typically in an unsupervised or weakly-supervised setting.

Deep Reasoning Networks: Thinking Fast and Slow

no code implementations • 3 Jun 2019 • Di Chen, Yiwei Bai, Wenting Zhao, Sebastian Ament, John M. Gregoire, Carla P. Gomes

At a high level, DRNets encode a structured latent space of the input data, which is constrained to adhere to prior knowledge by a reasoning module.

When Work Matters: Transforming Classical Network Structures to Graph CNN

no code implementations • 7 Jul 2018 • Wenting Zhao, Chunyan Xu, Zhen Cui, Tong Zhang, Jiatao Jiang, Zhen-Yu Zhang, Jian Yang

In this paper, we aim to give a comprehensive analysis of when work matters by transforming different classical network structures to graph CNN, particularly in the basic graph recognition problem.

Ranked #3 on Graph Classification on IMDb-B