Loss Functions

Loss Functions

Self-Adjusting Smooth L1 Loss

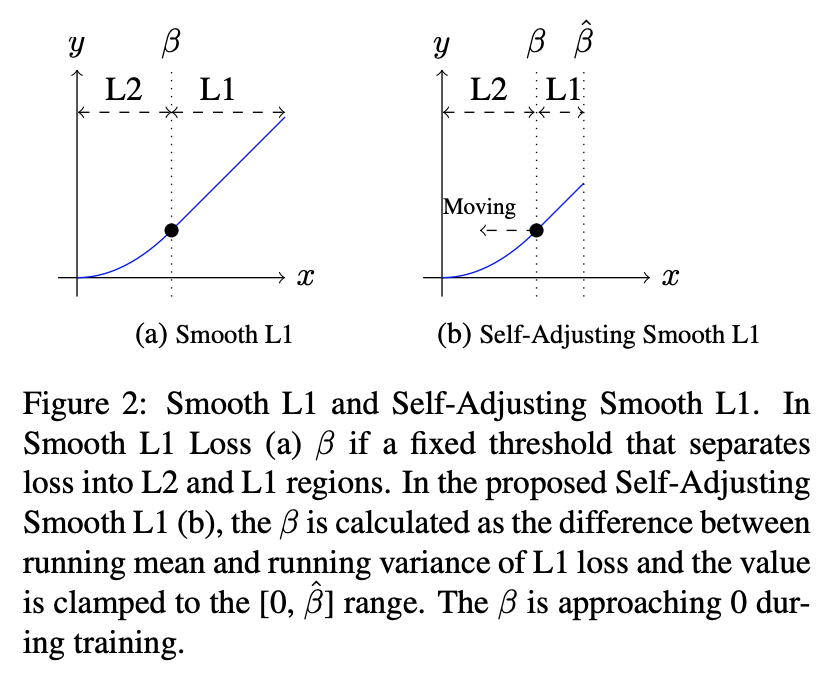

Introduced by Fu et al. in RetinaMask: Learning to predict masks improves state-of-the-art single-shot detection for freeSelf-Adjusting Smooth L1 Loss is a loss function used in object detection that was introduced with RetinaMask. This is an improved version of Smooth L1. For Smooth L1 loss we have:

$$ f(x) = 0.5 \frac{x^{2}}{\beta} \text{ if } |x| < \beta $$ $$ f(x) = |x| -0.5\beta \text{ otherwise } $$

Here a point $\beta$ splits the positive axis range into two parts: $L2$ loss is used for targets in range $[0, \beta]$, and $L1$ loss is used beyond $\beta$ to avoid over-penalizing utliers. The overall function is smooth (continuous, together with its derivative). However, the choice of control point ($\beta$) is heuristic and is usually done by hyper parameter search.

Instead, with self-adjusting smooth L1 loss, inside the loss function the running mean and variance of the absolute loss are recorded. We use the running minibatch mean and variance with a momentum of $0.9$ to update these two parameters.

Source: RetinaMask: Learning to predict masks improves state-of-the-art single-shot detection for freePapers

| Paper | Code | Results | Date | Stars |

|---|

Usage Over Time

Components

| Component | Type |

|

|---|---|---|

| 🤖 No Components Found | You can add them if they exist; e.g. Mask R-CNN uses RoIAlign |