CuMo: Scaling Multimodal LLM with Co-Upcycled Mixture-of-Experts

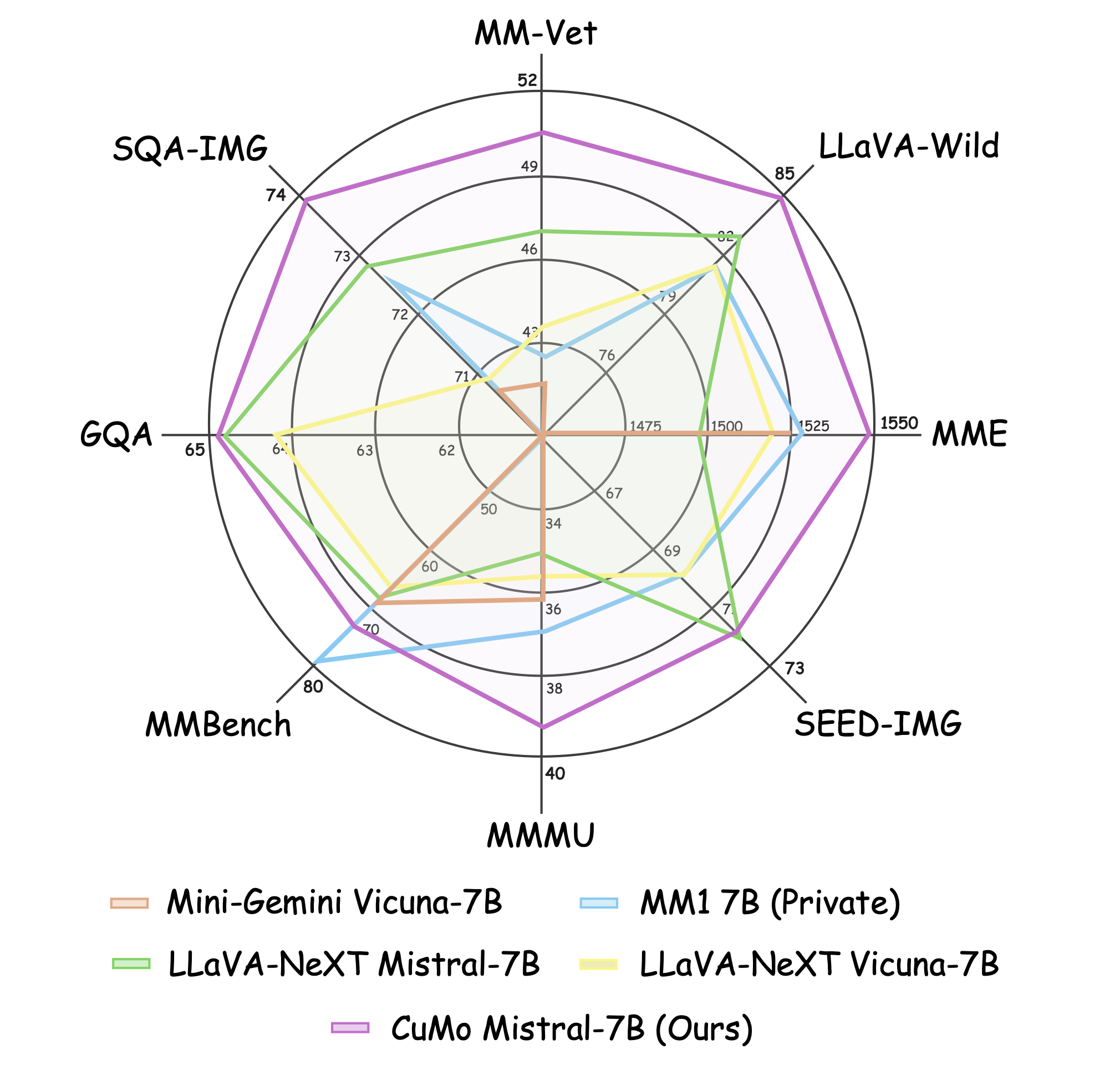

Recent advancements in multimodal Large Language Models (LLMs) have focused primarily on scaling by increasing text-image pair data and enhancing LLMs to improve performance on multimodal tasks. However, these scaling approaches are computationally expensive and overlook the significance of improving model capabilities from the vision side. Inspired by the successful applications of Mixture-of-Experts (MoE) in LLMs, which improves model scalability during training while keeping inference costs similar to those of smaller models, we propose CuMo. CuMo incorporates co-upcycled Top-K sparsely-gated Mixture-of-Experts blocks into both the vision encoder and the MLP connector, thereby enhancing multimodal LLMs with minimal additional activated parameters during inference. CuMo first pre-trains the MLP blocks and then initializes each expert in the MoE block from the pre-trained MLP block during the visual instruction tuning stage. Auxiliary losses are used to ensure a balanced load across experts. Within each model size group, CuMo outperforms state-of-the-art multimodal LLMs across various VQA and visual-instruction-following benchmarks, all while training exclusively on open-sourced datasets. The code and model weights for CuMo are open-sourced at https://github.com/SHI-Labs/CuMo.
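The upcycling and Top-K routing described in the abstract can be illustrated with a small pure-Python sketch. It is not taken from the CuMo repository: the function names, the ReLU activation, the dimensions, the choice of 4 experts with K=2, and the Switch-Transformer-style form of the balancing loss are all assumptions made for illustration. The sketch copies one "pre-trained" MLP into every expert, routes each token to its top-K experts, and computes one possible auxiliary load-balancing term.

```python
import copy
import math
import random


def mlp_forward(mlp, x):
    """Two-layer MLP, W2 @ relu(W1 @ x). ReLU is an assumption for this sketch."""
    hidden = [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in mlp["w1"]]
    return [sum(w * h for w, h in zip(row, hidden)) for row in mlp["w2"]]


def upcycle(mlp, num_experts):
    """Initialize every expert as an independent copy of the pre-trained MLP."""
    return [copy.deepcopy(mlp) for _ in range(num_experts)]


def softmax(values):
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(experts, router, x, k=2):
    """Route x to its top-k experts; return (output, chosen expert ids, router probs)."""
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    probs = softmax(logits)
    top = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalize gates over the selected experts
    out = [0.0] * len(experts[0]["w2"])
    for i in top:
        gate = probs[i] / norm
        y = mlp_forward(experts[i], x)
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, top, probs


def load_balance_loss(all_top, all_probs, num_experts):
    """Assumed Switch-style auxiliary loss: N * sum_i f_i * P_i.

    f_i is the fraction of routing slots assigned to expert i and
    P_i is the mean router probability for expert i over all tokens.
    """
    n_tokens = len(all_top)
    k = len(all_top[0])
    frac = [0.0] * num_experts
    for top in all_top:
        for i in top:
            frac[i] += 1.0 / (n_tokens * k)
    mean_prob = [sum(p[i] for p in all_probs) / n_tokens for i in range(num_experts)]
    return num_experts * sum(f * p for f, p in zip(frac, mean_prob))


# Hypothetical toy setup: random weights stand in for a pre-trained MLP.
rng = random.Random(0)
dim, hidden_dim, num_experts = 4, 8, 4
mlp = {
    "w1": [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(hidden_dim)],
    "w2": [[rng.uniform(-1, 1) for _ in range(hidden_dim)] for _ in range(dim)],
}
router = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)]
experts = upcycle(mlp, num_experts)
tokens = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(16)]
results = [moe_forward(experts, router, t, k=2) for t in tokens]
aux = load_balance_loss([r[1] for r in results], [r[2] for r in results], num_experts)
```

Because every expert starts as a copy of the same MLP and the gates are renormalized to sum to one, the MoE block in this sketch reproduces the original MLP output exactly at initialization. The experts only diverge once training updates them separately, which is the usual motivation for upcycling over random expert initialization.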


Results from the Paper

Ranked #1 on Visual Question Answering on MMBench (GPT-3.5 score metric)

GQA, Visual Question Answering v2.0, TextVQA, ScienceQA, MMBench, MM-Vet, SEED-Bench, MathVista, COST