Estimating Graph Dimension with Cross-validated Eigenvalues

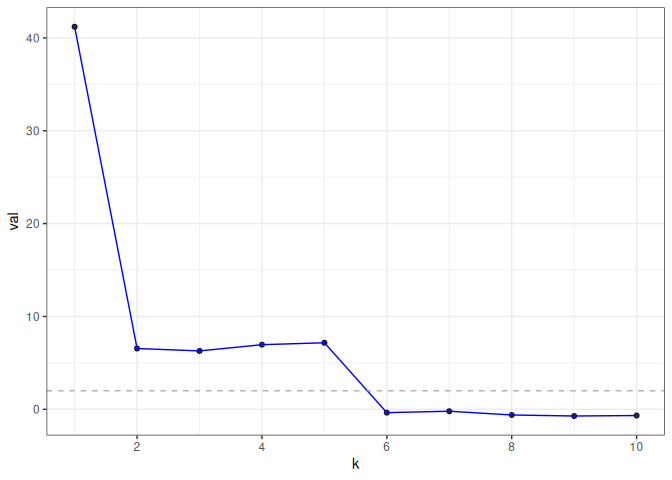

In applied multivariate statistics, estimating the number of latent dimensions or the number of clusters is a fundamental and recurring problem. One common diagnostic is the scree plot, which shows the largest eigenvalues of the data matrix; the user searches for a "gap" or "elbow" in the decreasing eigenvalues; unfortunately, these patterns can hide beneath the bias of the sample eigenvalues. This methodological problem is conceptually difficult because, in many situations, there is only enough signal to detect a subset of the $k$ population dimensions/eigenvectors. In this situation, one could argue that the correct choice of $k$ is the number of detectable dimensions. We alleviate these problems with cross-validated eigenvalues. Under a large class of random graph models, without any parametric assumptions, we provide a p-value for each sample eigenvector. It tests the null hypothesis that this sample eigenvector is orthogonal to (i.e., uncorrelated with) the true latent dimensions. This approach naturally adapts to problems where some dimensions are not statistically detectable. In scenarios where all $k$ dimensions can be estimated, we prove that our procedure consistently estimates $k$. In simulations and a data example, the proposed estimator compares favorably to alternative approaches in both computational and statistical performance.

PDF Abstract