Hierarchical Sketch Induction for Paraphrase Generation

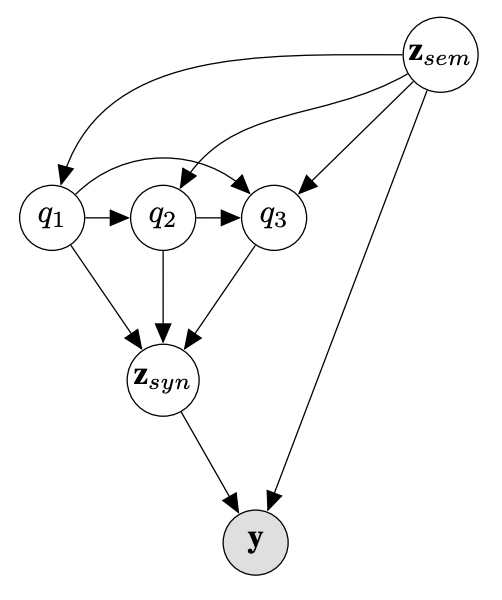

We propose a generative model of paraphrase generation that encourages syntactic diversity by conditioning on an explicit syntactic sketch. We introduce Hierarchical Refinement Quantized Variational Autoencoders (HRQ-VAE), a method for learning decompositions of dense encodings as a sequence of discrete latent variables that make iterative refinements of increasing granularity. This hierarchy of codes is learned through end-to-end training, and represents fine-to-coarse grained information about the input. We use HRQ-VAE to encode the syntactic form of an input sentence as a path through the hierarchy, allowing us to more easily predict syntactic sketches at test time. Extensive experiments, including a human evaluation, confirm that HRQ-VAE learns a hierarchical representation of the input space, and generates paraphrases of higher quality than previous systems.
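The idea of decomposing a dense encoding into a sequence of discrete codes, each refining the previous ones, can be illustrated with a small sketch. The code below is not the authors' implementation: it assumes plain greedy nearest-neighbour residual quantization with fixed random codebooks, whereas the paper learns the codebooks end-to-end. The names `encode_path`, `decode_path`, and `codebooks` are hypothetical and chosen only for illustration.

```python
import numpy as np


def encode_path(z, codebooks):
    """Greedily map a dense vector to one code index per hierarchy level.

    Assumption: each level picks the code nearest to the residual left
    over by the levels above it (simple residual quantization).
    """
    residual = np.asarray(z, dtype=float).copy()
    path = []
    for codebook in codebooks:
        # Distance from the current residual to every code at this level.
        dists = np.linalg.norm(codebook - residual, axis=1)
        idx = int(np.argmin(dists))
        path.append(idx)
        # The next level only has to explain what this level missed.
        residual = residual - codebook[idx]
    return path


def decode_path(path, codebooks, depth=None):
    """Rebuild an approximation by summing the chosen codes up to `depth`."""
    depth = len(path) if depth is None else depth
    approx = np.zeros_like(codebooks[0][0])
    for level in range(depth):
        approx = approx + codebooks[level][path[level]]
    return approx


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Three levels of 8 codes each; deeper levels use a smaller scale so
    # that they act as finer adjustments (an illustrative choice).
    codebooks = [rng.normal(scale=0.5 ** d, size=(8, 4)) for d in range(3)]
    z = rng.normal(size=4)
    path = encode_path(z, codebooks)
    print("path through the hierarchy:", path)
    for depth in range(1, len(path) + 1):
        err = np.linalg.norm(z - decode_path(path, codebooks, depth))
        print(f"depth {depth}: reconstruction error {err:.3f}")
```

Truncating the path at a shallower depth gives a coarser approximation of the same vector, which is the property that makes a path through the hierarchy a convenient target to predict at test time.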

ACL 2022

Datasets

| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| Paraphrase Generation | MSCOCO | HRQ-VAE | iBLEU | 19.04 | # 1 |
| Paraphrase Generation | MSCOCO | HRQ-VAE | BLEU | 27.90 | # 1 |
| Paraphrase Generation | Paralex | HRQ-VAE | iBLEU | 24.93 | # 1 |
| Paraphrase Generation | Paralex | HRQ-VAE | BLEU | 39.49 | # 1 |
| Paraphrase Generation | Quora Question Pairs | HRQ-VAE | iBLEU | 18.42 | # 1 |
| Paraphrase Generation | Quora Question Pairs | HRQ-VAE | BLEU | 33.11 | # 1 |
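For readers unfamiliar with the iBLEU metric reported alongside BLEU, it is commonly defined as BLEU against the references, penalised by BLEU against the input itself, so that copying the input is discouraged. The formula below is the general definition; the symbols $\hat{y}$ (generated paraphrase), $Y$ (reference paraphrases), $x$ (input sentence), and the weight $\alpha$ are notation assumed here, and the value of $\alpha$ used for these results should be taken from the paper.

$$
\mathrm{iBLEU} = \alpha \cdot \mathrm{BLEU}(\hat{y}, Y) - (1 - \alpha) \cdot \mathrm{BLEU}(\hat{y}, x)
$$

Because the second term is subtracted, iBLEU is always at most $\alpha$ times the corresponding BLEU score, which is consistent with the iBLEU values here being lower than the BLEU values for each dataset.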

MS COCO

Quora Question Pairs