Multimodal Chain-of-Thought Reasoning in Language Models

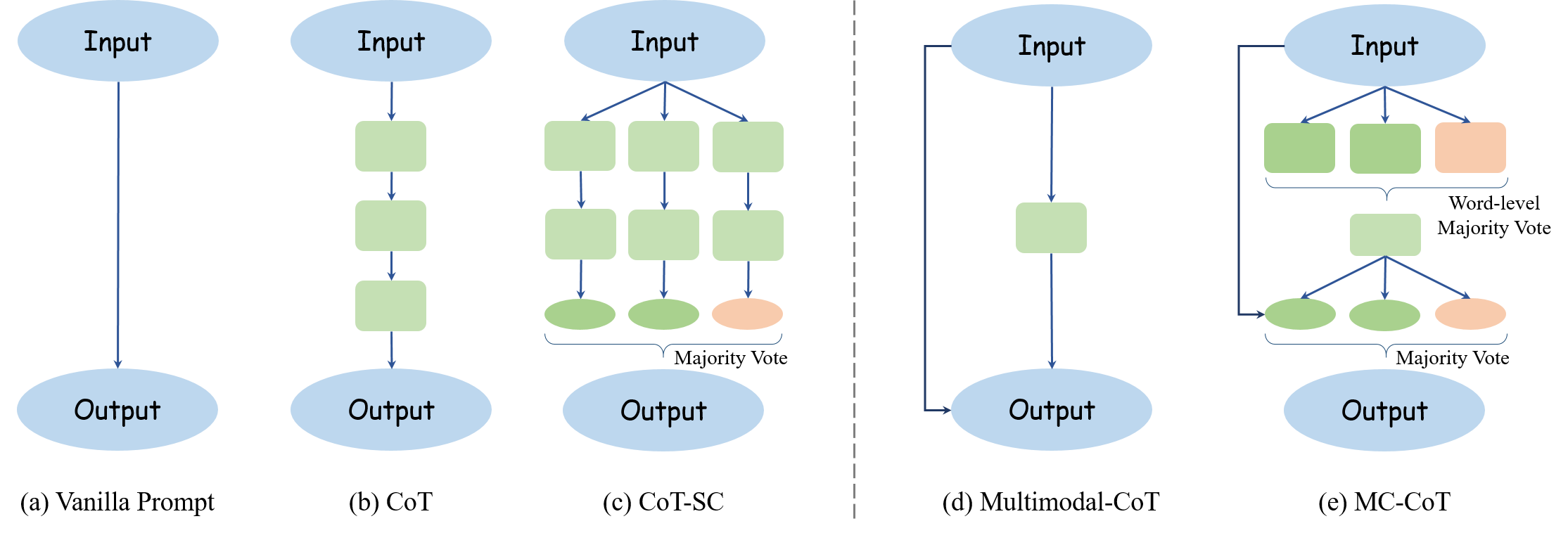

Large language models (LLMs) have shown impressive performance on complex reasoning by leveraging chain-of-thought (CoT) prompting to generate intermediate reasoning chains as the rationale for inferring the answer. However, existing CoT studies have primarily focused on the language modality. We propose Multimodal-CoT, which incorporates language (text) and vision (images) modalities into a two-stage framework that separates rationale generation from answer inference. In this way, answer inference can leverage better-generated rationales that are based on multimodal information. Experimental results on the ScienceQA and A-OKVQA benchmark datasets show the effectiveness of our proposed approach. With Multimodal-CoT, our model with under 1 billion parameters achieves state-of-the-art performance on the ScienceQA benchmark. Our analysis indicates that Multimodal-CoT offers the advantages of mitigating hallucination and enhancing convergence speed. Code is publicly available at https://github.com/amazon-science/mm-cot.
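The two-stage framework described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation (see the linked repository for that). The `Example` class, the `multimodal_cot` function, the prompt layout, and the toy stand-in models are all hypothetical names introduced here for illustration. The only thing taken from the abstract is the control flow: stage 1 generates a rationale from text and vision inputs, and stage 2 infers the answer from the same inputs plus that rationale.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

# Hypothetical container for one multimodal question (not from the paper's code).
@dataclass
class Example:
    question: str
    context: str
    options: Sequence[str]
    image_features: Sequence[float]  # stand-in for extracted vision features

# A "model" here is any callable mapping (text, image features) to generated text.
Model = Callable[[str, Sequence[float]], str]

def multimodal_cot(example: Example,
                   rationale_model: Model,
                   answer_model: Model) -> Tuple[str, str]:
    """Run the two stages in sequence and return (rationale, answer)."""
    text = (
        f"Question: {example.question}\n"
        f"Context: {example.context}\n"
        f"Options: {' | '.join(example.options)}"
    )
    # Stage 1: rationale generation from language and vision inputs.
    rationale = rationale_model(text, example.image_features)
    # Stage 2: answer inference; the generated rationale is appended to the
    # original text, and the same vision features are supplied again.
    answer = answer_model(f"{text}\nRationale: {rationale}", example.image_features)
    return rationale, answer

# Toy stand-ins so the sketch runs without any trained model (assumptions).
def toy_rationale_model(text: str, image_features: Sequence[float]) -> str:
    return "A magnet attracts iron." if image_features else "No visual evidence."

def toy_answer_model(text: str, image_features: Sequence[float]) -> str:
    return "iron nail" if "Rationale: A magnet attracts iron." in text else "unknown"

example = Example(
    question="Which object will the magnet attract?",
    context="A magnet is held near two objects.",
    options=["iron nail", "rubber band"],
    image_features=[0.1, 0.2, 0.3],
)
rationale, answer = multimodal_cot(example, toy_rationale_model, toy_answer_model)
```

Keeping the two stages as separate callables mirrors the separation the abstract describes: the answer stage only ever sees the rationale as additional input text, so either stage can be swapped out independently.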

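| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| Science Question Answering | ScienceQA | Multimodal CoT | Natural Science | 95.91 | #2 |
| Science Question Answering | ScienceQA | Multimodal CoT | Social Science | 82.00 | #4 |
| Science Question Answering | ScienceQA | Multimodal CoT | Language Science | 90.82 | #3 |
| Science Question Answering | ScienceQA | Multimodal CoT | Text Context | 95.26 | #2 |
| Science Question Answering | ScienceQA | Multimodal CoT | Image Context | 88.80 | #3 |
| Science Question Answering | ScienceQA | Multimodal CoT | No Context | 92.89 | #3 |
| Science Question Answering | ScienceQA | Multimodal CoT | Grades 1-6 | 92.44 | #3 |
| Science Question Answering | ScienceQA | Multimodal CoT | Grades 7-12 | 90.31 | #4 |
| Science Question Answering | ScienceQA | Multimodal CoT | Avg. Accuracy | 91.68 | #4 |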

ScienceQA

A-OKVQA
